The Complete Guide to Deepfake Detection


In a world where seeing is no longer believing, the line between reality and digital fabrication has become dangerously blurred. Welcome to the era of deepfakes, a technology so powerful it can create convincing-yet-entirely-fictional video, audio, and images of real people saying and doing things they never did. This isn't science fiction; it's the new reality we all must navigate.

From multi-million dollar corporate fraud to political disinformation campaigns, the malicious use of deepfakes is exploding. Statistics show that deepfake-related fraud attempts spiked by 3,000% in 2023, and the number of deepfake files online is projected to reach 8 million in 2025, a 16-fold increase from 2023 [1]. The threat is real, it's growing, and it affects everyone.

This guide is your definitive resource for understanding and combating this threat. We will take you on a comprehensive journey through the world of synthetic media, covering:

- The History of Deepfakes: From academic experiments to viral internet phenomena.

- The Technology Explained: A simple, non-technical look at how deepfakes are created.

- The Societal Impact: Why this technology is one of the most significant challenges of our time.

- How to Detect a Deepfake: Practical, actionable tips and visual signs to help you spot fakes.

- The Technology Fighting Back: An inside look at the advanced systems being built to detect deepfakes.

- Protecting Yourself and Your Organization: Concrete steps you can take today.

- The Future of Digital Trust: What comes next in the battle for reality.

Whether you're a journalist, a business leader, an educator, or simply a concerned citizen, this guide will equip you with the knowledge to protect yourself and your organization from the growing threat of digital deception.

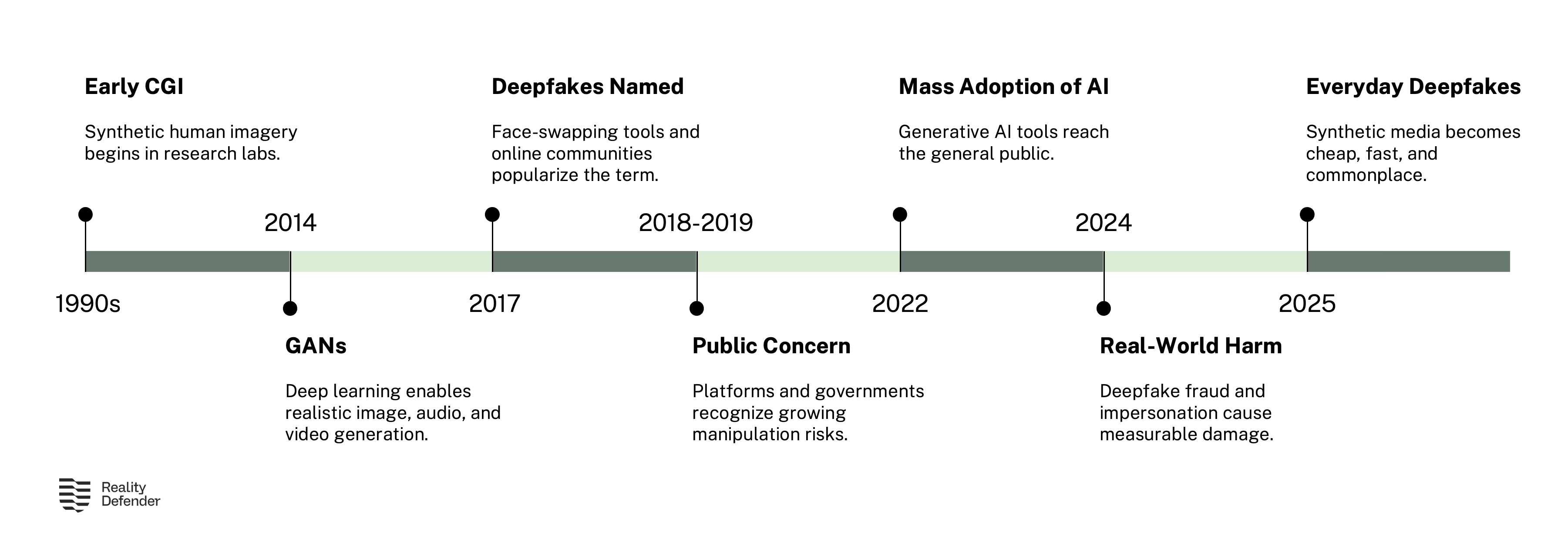

Chapter 1: The Rise of Synthetic Media: A History of Deepfakes

The concept of altering reality is not new, but the speed, scale, and realism of modern deepfakes are unprecedented. The technology's evolution has been rapid, moving from niche academic projects to a global security concern in just a few years. To understand where we are today, we must first understand how we got here.

The Academic Origins (1990s - 2016)

The foundational ideas behind deepfakes began in the academic world, rooted in the fields of computer vision and machine learning. As early as 1997, the "Video Rewrite" program demonstrated the ability to alter existing video footage of a person speaking to match a new audio track [3]. This was the first significant step towards automating facial reanimation, a concept that would later become central to deepfake technology. The program used machine learning techniques to make connections between the sounds produced by a video's subject and the shape of their face, allowing for the automatic generation of lip movements that matched a different audio source.

Throughout the early 2000s, researchers in computer vision continued to refine these techniques. The field made steady progress in areas like facial recognition, motion capture, and 3D modeling. However, a major breakthrough came in 2014 with the invention of Generative Adversarial Networks (GANs) by Ian Goodfellow and his colleagues at the University of Montreal. GANs involve two neural networks—a "generator" and a "discriminator"—competing against each other to create increasingly realistic images. This revolutionary architecture became the engine behind most modern deepfake creation, providing a powerful new method for generating synthetic content that was far more realistic than anything that had come before.

In 2016, the Face2Face project, from researchers at the University of Erlangen-Nuremberg, the Max Planck Institute for Informatics, and Stanford University, showcased an algorithm that could manipulate the facial expressions of a person in a YouTube video in real time, transferring the expressions of another person [4]. The technology was impressive, demonstrating the ability to puppeteer a person's face in a video using only a standard webcam. While still largely confined to research labs, Face2Face was a clear sign of the disruptive potential of this technology.

The Spark of Public Awareness (2017)

The term "deepfake" was coined in late 2017 by a Reddit user of the same name. This user created an online community dedicated to swapping the faces of celebrities into pornographic videos using deep learning techniques. The phenomenon quickly went viral, bringing the technology's potential for malicious use into the public spotlight for the first time. The Reddit community was eventually banned, but the genie was out of the bottle. The term "deepfake" entered the public lexicon, and awareness of the technology's dangers began to spread.

Simultaneously, academic projects continued to push boundaries. The "Synthesizing Obama" project, published by researchers at the University of Washington, demonstrated how a photorealistic video of former President Barack Obama could be generated from an audio track [5]. By training a neural network on hours of Obama's speeches, the researchers were able to create a convincing video of him saying words he had never spoken. This was a stark warning of the potential for political disinformation, showing that even the most powerful figures in the world could be digitally impersonated.

The Explosion and Commercialization (2018 - Present)

Since 2018, deepfake technology has exploded in accessibility and sophistication. What once required specialized knowledge and powerful hardware can now be done with user-friendly apps on a smartphone. This democratization has led to a surge in both creative and malicious applications. The barrier to entry has dropped dramatically, meaning that virtually anyone with a computer and an internet connection can now create a deepfake.

The following timeline highlights some of the key developments in this period:

| Year | Event | Significance |
|---|---|---|
| 2018 | UC Berkeley's "Everybody Dance Now" project | Researchers developed a "dancing deepfake" that could make anyone appear to be a master dancer, expanding the technology beyond just faces to full-body manipulation [6]. |
| 2019 | The viral app Zao launches in China | Allowed users to convincingly place their faces into clips from famous movies in seconds, showcasing the speed and ease of modern deepfake creation. The app was downloaded millions of times within days. |
| 2020 | Channel 4's deepfake Queen Elizabeth II | A deepfake of Queen Elizabeth II delivered an alternative Christmas message on a UK television channel, designed to be a stark warning about the technology's deceptive power. |
| 2022 | Deepfake of President Zelenskyy | A deepfake video of Ukrainian President Zelenskyy appearing to surrender circulated widely in the early days of the war with Russia, demonstrating the technology's potential for political manipulation during a crisis [8]. |
| 2024 | $25.6 Million Hong Kong Fraud | A finance worker at a multinational firm was tricked into transferring over $25 million after attending a video conference with deepfaked versions of his colleagues, including the CFO [7]. This case marked a turning point, demonstrating the technology's potential for large-scale financial crime. |
| 2025 | Deepfakes reach mainstream awareness | With 60% of consumers reporting they have encountered a deepfake, and fraud incidents surging, the technology has become a mainstream concern for individuals, businesses, and governments alike [11]. |

This rapid progression from academic curiosity to a tool for multi-million dollar fraud underscores the urgent need for robust detection methods.

Chapter 2: How Are Deepfakes Made? The Technology Explained

Understanding how to fight deepfakes begins with a basic understanding of how they are created. While the underlying technology is complex, the core concepts are accessible. We will explore this without revealing sensitive technical details, in line with our commitment to security.

At its heart, creating a deepfake is a process of teaching an AI model what a person looks and sounds like, and then using that model to generate new content. The process relies on a branch of artificial intelligence known as deep learning, which uses artificial neural networks to learn patterns from large amounts of data.

The Engine: Generative Adversarial Networks (GANs)

As mentioned earlier, the most common method for creating deepfakes involves a Generative Adversarial Network (GAN). Think of it as a competition between two AIs, locked in a constant battle to outwit each other:

- The Generator: This AI's job is to create the fake image or video. It starts by producing random noise and gradually learns to create more realistic content based on the training data (e.g., thousands of pictures of a specific person's face). Its goal is to create fakes so convincing that they can fool the Discriminator.

- The Discriminator: This AI acts as the detective. Its job is to look at an image and decide whether it's real (from the training dataset) or fake (created by the Generator). It provides feedback to the Generator on how to improve, essentially teaching it what makes an image look fake.

This process repeats millions of times in a feedback loop. With each iteration, the Generator gets better at making fakes, and the Discriminator gets better at spotting them. The end result is a Generator that can produce highly convincing synthetic images that can fool even a trained eye. The adversarial nature of this process is what drives the rapid improvement in the quality of deepfakes.
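
For readers who want the formal version, the competition described above is usually written as a minimax objective, following the original GAN paper by Goodfellow and colleagues. The symbols are not taken from this guide: $G$ is the Generator, $D$ is the Discriminator (its output is the estimated probability that an input is real), $x$ is a real training sample, and $z$ is the random noise fed to the Generator.

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

The Discriminator tries to make $V$ large by scoring real samples near 1 and generated samples near 0. The Generator tries to make $V$ small by producing samples that the Discriminator scores near 1.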

Other Techniques: Autoencoders and Diffusion Models

While GANs are popular, other methods exist and are becoming increasingly common. Autoencoders, including Variational Autoencoders (VAEs), are another type of neural network often used for face-swapping. An autoencoder works by compressing an image into a smaller, encoded representation and then reconstructing it. For face-swapping, a single shared encoder is trained together with two decoders, one for the source face and one for the target face. The system then swaps faces by encoding one person's face and decoding it with the other person's decoder. Autoencoder-based approaches are generally faster to train than GANs but may produce slightly blurrier results.

More recently, Diffusion Models have emerged as a powerful alternative. These models work by gradually adding noise to an image until it becomes pure static, and then learning to reverse this process to generate new images from noise. Diffusion models have been responsible for the recent explosion in AI-generated art and are increasingly being used for deepfake creation due to their ability to produce highly detailed and realistic images.
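
The "gradually adding noise" half of that description can be illustrated with a few lines of Python. This is a minimal sketch of the forward (noising) step only; the learned reversal is not shown. The linear schedule and its values (1,000 steps, noise variance rising from 0.0001 to 0.02) are assumptions borrowed from commonly used research settings, and the four-value "image" is a toy stand-in.

```python
import math
import random

def linear_beta_schedule(steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, rising linearly (an assumed schedule)."""
    return [beta_start + (beta_end - beta_start) * t / (steps - 1)
            for t in range(steps)]

def cumulative_signal(betas):
    """alpha_bar[t]: share of the original signal variance left after step t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, alpha_bar, rng):
    """Closed-form forward step: x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * noise."""
    keep = math.sqrt(alpha_bar)
    spread = math.sqrt(1.0 - alpha_bar)
    return [keep * p + spread * rng.gauss(0.0, 1.0) for p in pixels]

alpha_bars = cumulative_signal(linear_beta_schedule())
rng = random.Random(0)                      # fixed seed so runs are repeatable
image = [0.5, -0.25, 1.0, 0.0]              # toy "image": pixel values in [-1, 1]
slightly_noisy = add_noise(image, alpha_bars[10], rng)   # early step: mostly image
almost_static = add_noise(image, alpha_bars[-1], rng)    # final step: mostly noise
```

Early in the schedule nearly all of the original signal survives, while by the last step almost none does, which is the "pure static" the paragraph above refers to.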

Voice Cloning: The Audio Dimension

For audio deepfakes, or "voice cloning," the process is similar in principle. An AI model is trained on recordings of a person's speech. It learns the unique characteristics of their voice (pitch, cadence, accent, and emotional inflection) and can then be used to generate new audio of that person saying anything. Early systems needed hours of recordings, but modern voice cloning technology has become remarkably accurate, requiring as little as a few minutes of audio to create a convincing clone. This has made audio deepfakes a particularly dangerous tool for fraud, as scammers can use them to impersonate executives or family members over the phone.

The Process: From Data to Deception

Regardless of the specific technique, the general workflow for creating a deepfake follows a similar pattern:

- Data Collection: The creator gathers as much source material as possible of the target person. For video, this means thousands of images of their face from different angles and with different expressions. For audio, more clean speech recordings give better results, although, as noted above, a few minutes can be enough for a convincing clone. The more data available, the higher the quality of the resulting deepfake.

- Training: The AI model is trained on this dataset. This is the most time-consuming part of the process, often requiring powerful computers running for days or even weeks. During training, the model learns to recognize and replicate the subtle features of the target's face and voice.

- Generation: Once the model is trained, it can be used to generate the deepfake. This could involve swapping a face onto a different body in a video, creating a new video from just a photo and a script, or generating a synthetic audio clip.

- Post-Processing: The raw output from the AI model often requires refinement. This can include color correction, blending the edges of the swapped face, and syncing the audio with the video. Skilled creators can use post-processing to make deepfakes even more convincing.

What's crucial to understand is that the quality of a deepfake generally improves with the amount of data and computing power used. However, as technology advances, the barrier to creating convincing fakes continues to lower. Tools that were once available only to researchers with access to supercomputers are now accessible to anyone with a modern laptop.

Chapter 3: The Societal Impact: A Rising Tide of Deception

The implications of deepfake technology extend far beyond simple mischief. It represents a fundamental threat to our information ecosystem, personal security, and societal trust. The number of malicious deepfakes is doubling every six months, creating a crisis of authenticity [1]. This chapter explores the wide-ranging consequences of this technology.

The Spectrum of Malicious Use

Deepfakes are being weaponized across a wide range of domains, affecting individuals, businesses, and governments:

| Domain | Description of Threat | Real-World Consequence |
|---|---|---|
| Financial Fraud | Impersonating executives to authorize fraudulent wire transfers or trick employees into revealing sensitive information. | A UK energy firm's CEO was tricked by a voice clone into transferring $243,000. A Hong Kong firm lost $25.6 million to a deepfake video conference call [7]. |
| Political Disinformation | Creating fake videos of politicians to manipulate public opinion, swing elections, or incite unrest. | A deepfake video of Ukrainian President Zelenskyy appearing to surrender circulated widely in the early days of the war with Russia [8]. |
| Reputation Damage & Blackmail | Creating non-consensual pornographic material or other compromising content to harass, intimidate, or extort individuals. | 96% of all deepfakes online are non-consensual pornography, overwhelmingly targeting women [9]. |
| Corporate Espionage | Impersonating employees to gain access to confidential meetings, data, or internal systems. | The threat of deepfakes being used to bypass biometric security systems like voice or face ID is a growing concern for security experts. |
| Erosion of Public Trust | The mere existence of deepfakes creates a "liar's dividend," where real evidence can be dismissed as fake, making it harder to hold anyone accountable. | This undermines journalism, the justice system, and the very concept of shared reality. |

The Human Cost: Non-Consensual Imagery

One of the most devastating uses of deepfake technology is the creation of non-consensual intimate imagery (NCII). Victims, who are overwhelmingly women, have their faces superimposed onto pornographic content without their knowledge or consent. This can cause severe psychological harm, damage reputations, and even lead to job loss or suicide. The ease with which these images can be created and distributed has made this a widespread problem, and the legal frameworks in many countries have struggled to keep pace with the technology.

The Alarming Statistics

The numbers paint a grim picture of the escalating threat:

- Explosive Growth: The number of deepfake files online surged from 500,000 in 2023 to a projected 8 million in 2025 [1].

- Rampant Fraud: Deepfake-related fraud attempts in North America grew by 1,740% between 2022 and 2023 [10].

- Widespread Exposure: 60% of consumers report having encountered a deepfake video in the past year [11].

- Economic Threat: The generative AI market, which powers deepfakes, is projected to grow to over $440 billion by 2031, indicating the vast resources being poured into this technology [12].

- Celebrity and Political Targeting: In the first quarter of 2025 alone, celebrities were targeted 47 times (an 81% increase from all of 2024), and politicians were targeted 56 times [15].

- Average Business Loss: In 2024, businesses lost an average of nearly $500,000 per deepfake-related incident [1].
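
Percentages this large are easy to misread, so it can help to convert them into plain multipliers. The short sketch below only re-derives figures already quoted in this chapter; the helper names are made up for illustration, and treating 2023 to 2025 as a 24-month span is an assumption.

```python
def fold_increase(start, end):
    """How many times larger `end` is than `start`."""
    return end / start

def growth_from_doubling(months, months_per_doubling=6):
    """Total growth factor if a quantity doubles every `months_per_doubling` months."""
    return 2 ** (months / months_per_doubling)

def percent_increase_to_multiplier(percent):
    """A 100% increase means 2x, so an increase of p% means (1 + p/100)x."""
    return 1 + percent / 100

files_growth = fold_increase(500_000, 8_000_000)           # 2023 -> projected 2025
doubling_growth = growth_from_doubling(24)                 # assumed 24-month span
fraud_multiplier = percent_increase_to_multiplier(1_740)   # North America, 2022 -> 2023

print(files_growth, doubling_growth, round(fraud_multiplier, 1))
```

Under those assumptions the projected rise in deepfake files is a 16-fold increase, which is what four six-month doublings would produce, and a 1,740% increase means attempts were roughly 18.4 times their earlier level.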

This is not a future problem; it is happening now. The rapid advancement of this technology requires an equally rapid response from individuals and organizations.

The "Liar's Dividend"

Perhaps the most insidious consequence of deepfakes is what researchers call the "liar's dividend." As the public becomes more aware that any video or audio recording could be a deepfake, it becomes easier for bad actors to dismiss genuine evidence as fake. A politician caught on tape saying something incriminating can simply claim the recording is a deepfake. This erodes trust in all media, making it harder to establish a shared understanding of reality and hold people accountable for their actions. The very existence of deepfakes, therefore, harms society even when no specific deepfake is involved.

Chapter 4: How to Detect a Deepfake: A Practical Guide

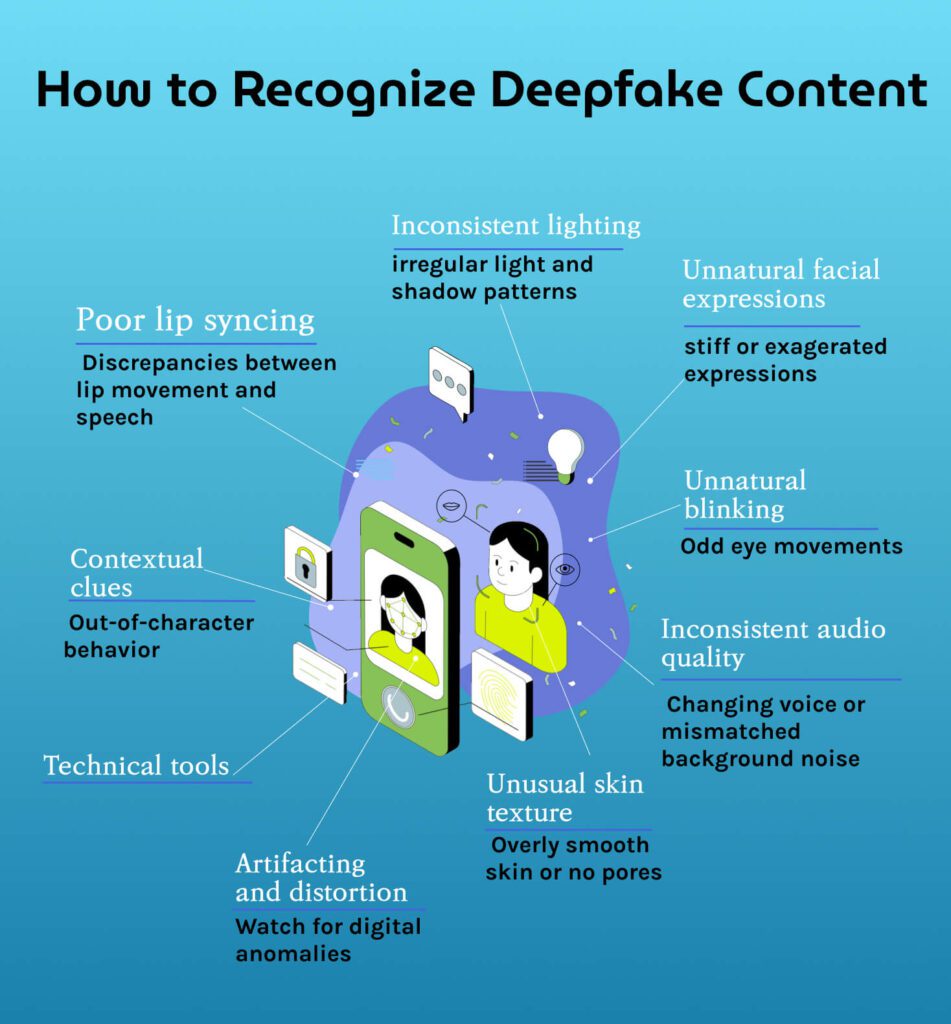

While the most sophisticated deepfakes can be nearly impossible to spot with the naked eye, many still contain subtle flaws and inconsistencies. Learning to recognize these tell-tale signs is a crucial skill in the modern digital landscape. This chapter provides a comprehensive guide to the visual, audio, and behavioral cues that can help you identify synthetic media.

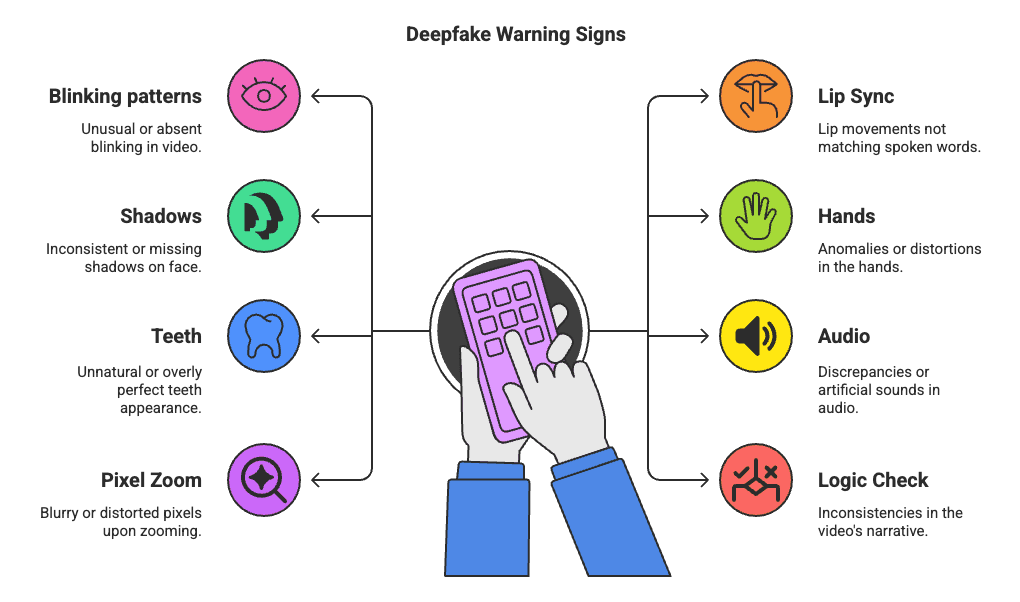

Here are the key areas to scrutinize when you suspect a video or image might be a deepfake:

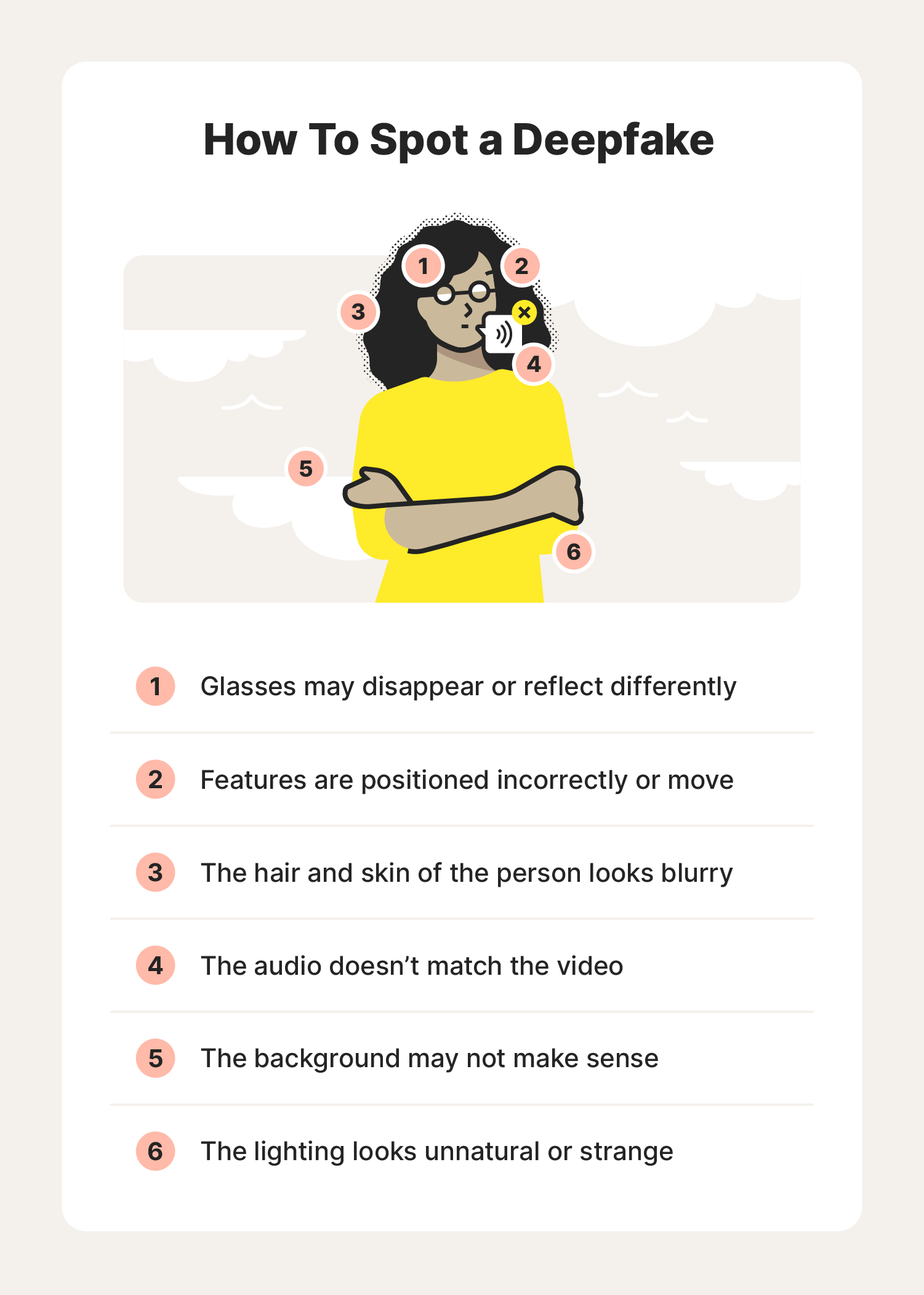

Visual Signs: What to Look For in Images and Video

1. Unnatural Eye Movement and Blinking: AI often struggles to replicate natural blinking. Look for blinking patterns that are too frequent, too infrequent, or seem mechanical. The eyes might also fail to track objects or people in the scene naturally. In some deepfakes, the eyes may appear lifeless or fail to reflect light correctly.

2. Inconsistent Facial Features: Pay close attention to the details. Do moles or freckles appear and disappear between frames? Are the teeth unnaturally perfect, or do they seem to change shape as the person speaks? AI models can struggle with maintaining the consistency of these small features across different frames. Look for teeth that morph, overlap, or have sharp, inconsistent edges.

3. Poor Lip-Syncing: This is one of the classic giveaways. The movement of the lips may not perfectly match the spoken words. The edges of the mouth might appear blurry or distorted as the person speaks. Pay attention to the corners of the mouth, which can warp unnaturally in deepfakes.

4. Awkward Facial Expressions: Deepfakes can have difficulty replicating the full range of human emotion. Expressions may seem stiff, exaggerated, or emotionally disconnected from the context of the conversation. The micro-expressions that humans naturally make are often absent or incorrect.

5. Blurry or Warped Edges: Look at the outline of the person's face and hair. You might notice blurring, flickering, or distortion where the deepfaked face meets the rest of the image or video. This is especially common around the hair and neckline, where the AI has to blend the synthetic face with the original body.

6. Unnatural Skin Texture: The skin might appear overly smooth, like a digital beauty filter has been applied, or it might look flat and lack the subtle pores and wrinkles of real human skin. The lighting on the face might also be inconsistent with the lighting in the surrounding environment.

7. Jewelry and Accessories: AI models often struggle with complex accessories like earrings, glasses, and necklaces. Look for jewelry that warps, disappears, or changes shape between frames. Glasses may show strange reflections or distortions.

8. Background Inconsistencies: While the focus is often on the face, the background can also provide clues. Look for objects that warp, blur, or change position unnaturally. The background may also appear overly smooth or lack detail.
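
Sign 1 above (blinking) is one of the few visual cues that is simple to quantify. The sketch below uses the eye aspect ratio, a heuristic from the blink-detection research literature: the ratio drops sharply when the eye closes. It assumes that six landmark points per eye are already available for each frame from some face-landmark detector, which is not shown here. The 0.2 threshold and the two-frame minimum are illustrative values, not calibrated ones.

```python
import math

def eye_aspect_ratio(eye):
    """Eye aspect ratio from six (x, y) landmarks.

    Assumed ordering: p1 and p4 are the horizontal eye corners,
    p2 and p3 lie on the upper lid, p6 and p5 on the lower lid.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

def count_blinks(ear_per_frame, threshold=0.2, min_frames=2):
    """Count runs of at least `min_frames` consecutive frames below `threshold`."""
    blinks, run = 0, 0
    for ear in ear_per_frame:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:          # clip ended while the eye was still closed
        blinks += 1
    return blinks

def blinks_per_minute(ear_per_frame, fps):
    """Blink rate for a clip, given its frame rate."""
    minutes = len(ear_per_frame) / fps / 60.0
    return count_blinks(ear_per_frame) / minutes

# Toy data: an open eye, then a hand-written series of per-frame ratios.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
open_ratio = eye_aspect_ratio(open_eye)
series = [0.3, 0.3, 0.1, 0.1, 0.3, 0.3, 0.15, 0.3, 0.1, 0.1, 0.1]
blink_count = count_blinks(series)
```

A blink rate far from what you would expect of a natural speaker is a reason to look more closely, not proof of manipulation: newer deepfakes often blink convincingly, and real people vary.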

Audio Signs: What to Listen For

9. Robotic or Monotonous Voice: Even with advanced voice cloning, the audio can sometimes sound flat, lack emotional inflection, or have a subtle robotic quality. The cadence and rhythm of speech might feel unnatural.

10. Strange Pauses or Emphasis: Listen for pauses that occur in unusual places or for emphasis being placed on random words in a sentence. This can indicate that the audio has been stitched together by an AI.

11. Inconsistent Background Noise: The ambient sound in the background should remain consistent. If the background noise suddenly changes, disappears, or doesn't match the visual environment, it could be a red flag.

12. Breathing and Natural Sounds: Real human speech includes natural sounds like breathing, lip smacking, and throat clearing. These are often absent or sound artificial in voice clones.
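
Sign 11 above (inconsistent background noise) can be roughly approximated in code. The following is a crude sketch, assuming the audio has already been decoded into a list of samples between -1 and 1. It estimates the noise floor as the quietest frame in each window and flags places where that floor shifts abruptly. The frame size, window length, and 10 dB jump threshold are arbitrary illustrative choices, and real recordings can change noise level for innocent reasons.

```python
import math

def frame_levels_db(samples, frame_size):
    """RMS level of each non-overlapping frame, in decibels relative to full scale."""
    levels = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(s * s for s in frame) / frame_size)
        levels.append(20.0 * math.log10(max(rms, 1e-10)))  # clamp to avoid log(0)
    return levels

def noise_floor_jumps(levels_db, window=5, jump_db=10.0):
    """Frame indices where the estimated noise floor changes by more than `jump_db`.

    The floor of each window is taken to be its quietest frame.
    """
    floors = [min(levels_db[i:i + window])
              for i in range(0, len(levels_db) - window + 1, window)]
    return [k * window for k in range(1, len(floors))
            if abs(floors[k] - floors[k - 1]) > jump_db]

# Toy signal: quiet background hiss followed by a much louder one.
quiet = [0.001 if i % 2 == 0 else -0.001 for i in range(8000)]
loud = [0.1 if i % 2 == 0 else -0.1 for i in range(8000)]
suspect_frames = noise_floor_jumps(frame_levels_db(quiet + loud, frame_size=400))
```

In the toy signal the only flagged position is the frame where the background level changes, which is the kind of discontinuity that spliced or generated audio sometimes shows.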

Behavioral and Contextual Signs

13. Trust Your Instincts: Often, the first sign that something is wrong is a simple gut feeling. If the person's behavior seems out of character, the message feels unusually urgent or strange, or the whole situation just feels "off," it's worth investigating further.

14. Verify Through Other Channels: If you receive a suspicious video call or voice message from someone you know, hang up and call them back on a known, trusted number. If a video of a public figure seems questionable, check reputable news sources to see if it has been verified or debunked.

15. Consider the Source: Where did the video or image come from? Is it from a reputable source, or did it appear on an anonymous social media account? Be especially skeptical of content that appears suddenly and is designed to provoke a strong emotional reaction.

While these tips can help, human observation alone cannot give you certainty. For high-stakes situations, relying on it is a significant risk: pair it with a dedicated detection tool and independent verification, keeping in mind that no tool is infallible either.

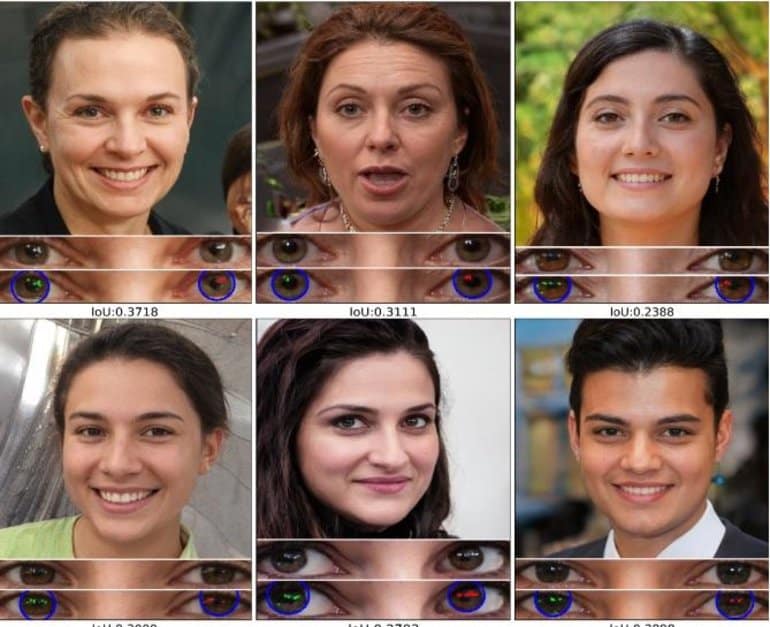

Chapter 5: The Arms Race: Technology Fighting Back

As deepfake technology becomes more sophisticated, the need for automated, reliable detection has become critical. A new industry is emerging, dedicated to building the tools necessary to fight back against synthetic media and restore digital trust. VerifyReal is at the forefront of this battle.

Human detection, while a useful first line of defense, is no longer sufficient. The best deepfakes are designed to fool our senses. To combat them, we need technology that can see the invisible artifacts and statistical inconsistencies that AI creators leave behind.

How Deepfake Detection Technology Works

Modern detection platforms don't rely on a single method. Instead, they employ a multi-layered AI analysis approach, creating a robust defense that is difficult for fakers to bypass. This is similar to how cybersecurity uses multiple layers of protection (firewalls, antivirus, intrusion detection, etc.) to create a defense-in-depth strategy.

At VerifyReal, our system is built on the principle of an advanced AI ensemble. This means multiple, specialized AI models work together, each looking for different types of clues. This approach is far more effective than relying on a single algorithm, as it can catch a wider range of deepfakes and is more resilient to new creation techniques.

Our layers of analysis include:

- Forensic-Grade Analysis: This layer examines the digital fingerprint of an image or video. It looks for subtle clues in the pixels, compression data, and other metadata that are invisible to the human eye but can reveal manipulation. Every time an image is edited or processed, it leaves behind traces that forensic analysis can detect.

- AI-Generated Content Detection: Specialized models trained on millions of real and fake images learn to recognize the statistical patterns unique to AI-generated content. They can spot artifacts that even the creators may not know are there. These models are constantly updated to keep pace with the latest generation techniques.

- Manipulation Detection: This layer focuses on identifying more traditional forms of editing, such as splicing, cloning, or removing objects from a scene. Even if a deepfake is created using advanced AI, it may still be combined with traditional editing techniques that can be detected.

- Explainable AI Results: A key part of our philosophy is providing not just a "real" or "fake" verdict, but showing why. Our system generates visual heatmaps that highlight the specific areas of an image or video that are suspected of being manipulated, empowering users to make informed decisions.

This multi-dimensional analysis ensures that even as one type of deepfake creation becomes more advanced, other layers of detection can still catch it. It's a constantly evolving arms race, and our commitment is to continuous model improvement to stay ahead of the threat.
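
To make the ensemble idea concrete, here is a minimal sketch of how scores from several independent layers might be combined. It is an illustration of the general technique only, not a description of how VerifyReal or any other product works: the layer names, weights, and thresholds are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LayerResult:
    name: str            # hypothetical layer label
    fake_score: float    # 0.0 = looks authentic, 1.0 = looks synthetic
    weight: float = 1.0  # how much this layer counts in the average

def combine(results, flag_threshold=0.5, single_layer_alarm=0.9):
    """Weighted average of layer scores, plus an override when one layer is very sure."""
    if not results:
        raise ValueError("at least one layer result is required")
    for r in results:
        if not 0.0 <= r.fake_score <= 1.0:
            raise ValueError(f"score out of range for layer {r.name}")
    total_weight = sum(r.weight for r in results)
    score = sum(r.fake_score * r.weight for r in results) / total_weight
    strongest = max(results, key=lambda r: r.fake_score)
    flagged = score >= flag_threshold or strongest.fake_score >= single_layer_alarm
    return {"score": score, "flagged": flagged, "strongest_signal": strongest.name}

verdict = combine([
    LayerResult("forensic", 0.20),
    LayerResult("ai_generated", 0.95),
    LayerResult("manipulation", 0.10),
])
```

In this example two layers see little wrong, so the average stays below the flag threshold, but the content is still flagged because one layer is highly confident. That is the practical benefit of layering: a fake only has to slip up in one dimension.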

The Importance of Privacy-First Design

In the fight for digital trust, user privacy is paramount. That's why platforms like VerifyReal are built with a zero data retention policy. When you upload an image for analysis, it is processed in real time and then immediately deleted. We don't store your data, ensuring that your sensitive information remains yours alone. This is especially important for journalists, lawyers, and businesses who may be analyzing sensitive material.

The Limitations of Detection

It's important to be realistic about the limitations of deepfake detection. The technology is in a constant arms race with deepfake creation. As detection methods improve, so do the techniques used to create deepfakes. No detection system is 100% accurate, and the most sophisticated deepfakes can sometimes evade detection. This is why a multi-layered approach, combining technology with human judgment and verification protocols, is essential.

Chapter 6: Protecting Yourself and Your Organization

Understanding the threat is the first step. The next is taking concrete action to protect yourself and your organization. This chapter provides practical guidance for individuals and businesses.

For Individuals: Building Your Digital Defenses

- Be Skeptical: Approach all online media with a healthy dose of skepticism, especially if it seems designed to provoke a strong emotional reaction. Ask yourself: Who created this? What is their motive? Is this too good (or too bad) to be true?

- Verify Before You Share: Before sharing a video or image on social media, take a moment to verify its authenticity. Check if reputable news organizations have reported on it. Use reverse image search tools to see if the image has been used elsewhere.

- Use Detection Tools: For important decisions, don't rely on your eyes alone. Use a deepfake detection tool like VerifyReal to analyze suspicious content.

- Protect Your Digital Footprint: The more images and audio of you that are publicly available online, the easier it is for someone to create a deepfake of you. Be mindful of what you share on social media.

- Establish Verification Protocols with Family: Agree on a secret code word or question with family members that can be used to verify identity in case of a suspicious phone call or video chat.

For Businesses: Implementing a Deepfake Defense Strategy

- Educate Your Employees: Train all employees, especially those in finance and HR, to recognize the signs of deepfake fraud. Conduct regular awareness campaigns and simulated phishing exercises.

- Implement Multi-Factor Authentication for Financial Transactions: Never authorize large wire transfers based solely on a phone call or video conference. Require multiple levels of approval and verification through independent channels.

- Establish Verification Protocols: Create clear protocols for verifying the identity of anyone requesting sensitive information or financial transactions. This should include call-back procedures to known phone numbers.

- Invest in Detection Technology: Integrate deepfake detection tools into your security infrastructure. This can help identify fraudulent communications before they cause damage.

- Develop an Incident Response Plan: Have a plan in place for how to respond if your organization is targeted by a deepfake attack. This should include communication strategies, legal considerations, and steps for investigating the incident.

- Monitor Your Brand: Use media monitoring services to track mentions of your company and executives online. This can help you identify deepfake attacks early and respond quickly.
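
Rules like "multiple approvals" and "call back on a known number" work best when they are enforced by a system rather than left to memory under pressure. The sketch below shows one way such a rule could be expressed. The class, field names, threshold, and approver count are hypothetical and would need to match your own finance policy.

```python
from dataclasses import dataclass, field

@dataclass
class TransferRequest:
    amount: float
    approvers: set = field(default_factory=set)   # distinct people who signed off
    callback_verified: bool = False               # confirmed via a known number on file

def may_execute(request, large_threshold=10_000, required_approvers=2):
    """Large transfers need an independent call-back AND enough distinct approvers.

    Smaller transfers fall through to the normal process (not modeled here).
    """
    if request.amount < large_threshold:
        return True
    return request.callback_verified and len(request.approvers) >= required_approvers

# A request made on a video call, with nothing verified independently.
urgent = TransferRequest(amount=25_600_000)
blocked = may_execute(urgent)

# The same request after a call-back and two separate sign-offs.
urgent.callback_verified = True
urgent.approvers.update({"finance_lead", "controller"})
allowed = may_execute(urgent)
```

The point of the design is that a convincing face or voice on a call never satisfies the check by itself; only the independent steps do.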

Chapter 7: The Future of Digital Trust

The rise of deepfakes is more than a technological challenge; it's a societal one. It forces us to confront difficult questions about the nature of evidence, trust, and reality in the digital age. As we look to the future, the battle against synthetic media will be fought on three main fronts: technology, education, and policy.

The Technological Horizon

The arms race between deepfake creation and detection will only accelerate. We can expect several key developments in the coming years:

- Real-Time Detection: The next frontier is the ability to detect deepfakes live, during a video call or broadcast. This is a monumental technical challenge but is essential for preventing real-time fraud. Companies are already working on solutions that can analyze video streams in real time and flag potential deepfakes.

- Proactive Authentication: Instead of just detecting fakes, new technologies will focus on authenticating real content at the source. This could involve digital watermarking or cryptographic signatures embedded in cameras and recording devices. The Coalition for Content Provenance and Authenticity (C2PA) is developing standards for this approach.

- Expansion to New Modalities: The focus will expand beyond video and audio to include text, 3D models, and even virtual reality environments. As AI becomes capable of generating more types of content, detection methods will need to keep pace.

- Improved Accessibility: Detection tools will become more accessible and user-friendly, allowing anyone to verify the authenticity of content with a few clicks.
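
The idea behind proactive authentication can be shown with a small example: sign the content when it is captured, then check the signature later to see whether anything changed. This is a simplified sketch, not how C2PA works. Real provenance standards rely on public-key signatures and signed manifests, whereas this example uses an HMAC with a shared secret key purely so that it runs with Python's standard library. The key and the sample bytes are placeholders.

```python
import hashlib
import hmac

def sign_content(content: bytes, key: bytes) -> str:
    """Tag for the content: an HMAC-SHA256 over its SHA-256 digest."""
    digest = hashlib.sha256(content).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()

def verify_content(content: bytes, key: bytes, signature: str) -> bool:
    """True only if the content still matches the tag created at capture time."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, signature)  # constant-time comparison

device_key = b"placeholder-secret-for-illustration-only"
original = b"raw bytes of a photo as captured"
tag = sign_content(original, device_key)

untouched_ok = verify_content(original, device_key, tag)
tampered_ok = verify_content(original + b"!", device_key, tag)
```

Changing even a single byte makes verification fail, so authentic content can prove itself instead of every fake having to be caught.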

The Educational Imperative

Technology alone is not a silver bullet. A digitally literate populace is our best long-term defense. Media literacy education needs to be a core part of school curricula, teaching students from a young age to critically evaluate the information they consume online. Public awareness campaigns are needed to educate everyone on the signs of deepfakes and the importance of verification. This includes teaching people not just how to spot fakes, but also how to think critically about the information they encounter online.

The Policy and Regulatory Landscape

Governments and regulatory bodies are beginning to grapple with the legal and ethical challenges of deepfakes. We are likely to see:

- Stricter Laws: New legislation will impose severe penalties for the creation and distribution of malicious deepfakes, particularly in areas like non-consensual pornography and election interference. Several countries and US states have already passed laws targeting deepfakes.

- Platform Accountability: Social media and content platforms will face increasing pressure to implement robust detection and moderation policies to prevent the spread of harmful synthetic media. This may include requirements for labeling AI-generated content.

- International Cooperation: Since deepfakes know no borders, international agreements will be necessary to combat their creation and distribution globally.

Ultimately, the future of digital trust relies on a collaborative effort. It requires technology companies to build responsible and effective tools, educators to empower the public with knowledge, and policymakers to create a legal framework that protects citizens without stifling innovation.

Conclusion: Reclaiming Reality

We stand at a crossroads. The same technology that can be used to create stunning art and entertainment can also be used to commit devastating fraud and tear at the fabric of our society. The era of passive media consumption is over. We must all become active, critical participants in our information ecosystem.

This guide has provided you with the knowledge to understand the history of deepfakes, the technology behind them, their societal impact, and the practical steps you can take to identify them. But knowledge is only the first step.

"In a world of deepfakes, trust is the new currency."

In high-stakes situations, the human eye is not enough. The future of truth depends on reliable, accessible, and privacy-focused technology to verify the authenticity of digital content.

Don't be a victim of deception. Take the next step in securing your digital reality.

Try VerifyReal for Free and Analyze Your First Image Now

Frequently Asked Questions (FAQ)

Q: What is a deepfake? A: A deepfake is a piece of synthetic media (typically a video, image, or audio clip) that has been created or manipulated using artificial intelligence. The term combines "deep learning" (a type of AI) and "fake."

Q: How can I tell if a video is a deepfake? A: Look for visual inconsistencies like unnatural blinking, poor lip-syncing, blurry edges around the face, and overly smooth skin. Listen for audio cues like a robotic voice or strange pauses. For high-stakes situations, use a dedicated detection tool.

Q: Are deepfakes illegal? A: The legality of deepfakes varies by jurisdiction and depends on how they are used. Creating and distributing non-consensual pornographic deepfakes is illegal in many places. Using a deepfake to commit fraud is generally covered by existing fraud laws, and a growing number of jurisdictions restrict deceptive deepfakes in elections. Creating deepfakes for satire, entertainment, or art is generally legal.

Q: Can deepfakes be detected? A: Yes, deepfakes can often be detected using a combination of human observation and AI-powered detection tools. However, the technology is constantly evolving, and the most sophisticated deepfakes can be difficult to identify.

Q: How can I protect myself from deepfake fraud? A: Be skeptical of unexpected requests for money or sensitive information, especially if they come via video call or voice message. Verify the person's identity through an independent channel, for example by calling them back on a phone number you already know. Use strong verification protocols for financial transactions.

References

[1] Deepstrike.io (2025). Deepfake Statistics 2025: AI Fraud Data & Trends. https://deepstrike.io/blog/deepfake-statistics-2025

[2] Reality Defender. A Brief History of Deepfakes. https://www.realitydefender.com/insights/history-of-deepfakes

[3] Bregler, C., Covell, M., & Slaney, M. (1997). Video Rewrite: Driving Visual Speech with Audio. SIGGRAPH 1997.

[4] Thies, J., Zollhöfer, M., Stamminger, M., Theobalt, C., & Nießner, M. (2016). Face2Face: Real-time Face Capture and Reenactment of RGB Videos. CVPR 2016.

[5] Suwajanakorn, S., Seitz, S. M., & Kemelmacher-Shlizerman, I. (2017). Synthesizing Obama: Learning Lip Sync from Audio. ACM Transactions on Graphics.

[6] Chan, C., Ginosar, S., Zhou, T., & Efros, A. A. (2019). Everybody Dance Now. ICCV 2019.

[7] CNN (2024). Finance worker pays out $25 million after video call with deepfake 'CFO'. https://www.cnn.com/2024/02/04/asia/deepfake-cfo-scam-hong-kong-intl-hnk

[8] The Guardian (2022). Deepfake of Zelenskyy telling Ukrainians to 'lay down arms' debunked.

[9] Sensity AI (2021). The State of Deepfakes.

[10] World Economic Forum (2025). Detecting dangerous AI is essential in the deepfake era. https://www.weforum.org/stories/2025/07/why-detecting-dangerous-ai-is-key-to-keeping-trust-alive/

[11] Eftsure (2025). Deepfake statistics (2025): 25 new facts for CFOs. https://www.eftsure.com/statistics/deepfake-statistics/

[12] UNESCO (2025). Deepfakes and the crisis of knowing. https://www.unesco.org/en/articles/deepfakes-and-crisis-knowing

[13] CloudGuard (2026). How to Spot A Deepfake: Signs Everyone Should Know in 2026. https://cloudguard.ai/resources/how-to-spot-a-deepfake/

[14] Experian. How businesses can detect and mitigate deepfake attacks.

[15] Surfshark (2025). Deepfake statistics 2025: how frequently are celebrities targeted. https://surfshark.com/research/study/deepfake-statistics
