How VerifyReal's Technology Works: A High-Level Overview

Reading Time: 7 minutes | Category: Product Updates

In a world filled with AI-generated images and deepfakes, how can you know what's real? At VerifyReal, we're building the technology to restore trust in digital media. We often get asked how our system works—how we can detect sophisticated fakes with such high accuracy in just a few seconds.

While we can't reveal the specific, proprietary algorithms that give us our competitive edge, we believe in transparency. This article provides a high-level overview of our detection philosophy and the multi-layered approach that powers VerifyReal.

Our core principle is simple: no single detection method is enough. To stay ahead of the rapidly evolving world of synthetic media, we use an advanced AI ensemble that combines multiple layers of analysis. Think of it like a team of world-class detectives, each with a unique specialty, working together to solve a case.

The Power of Layered Analysis

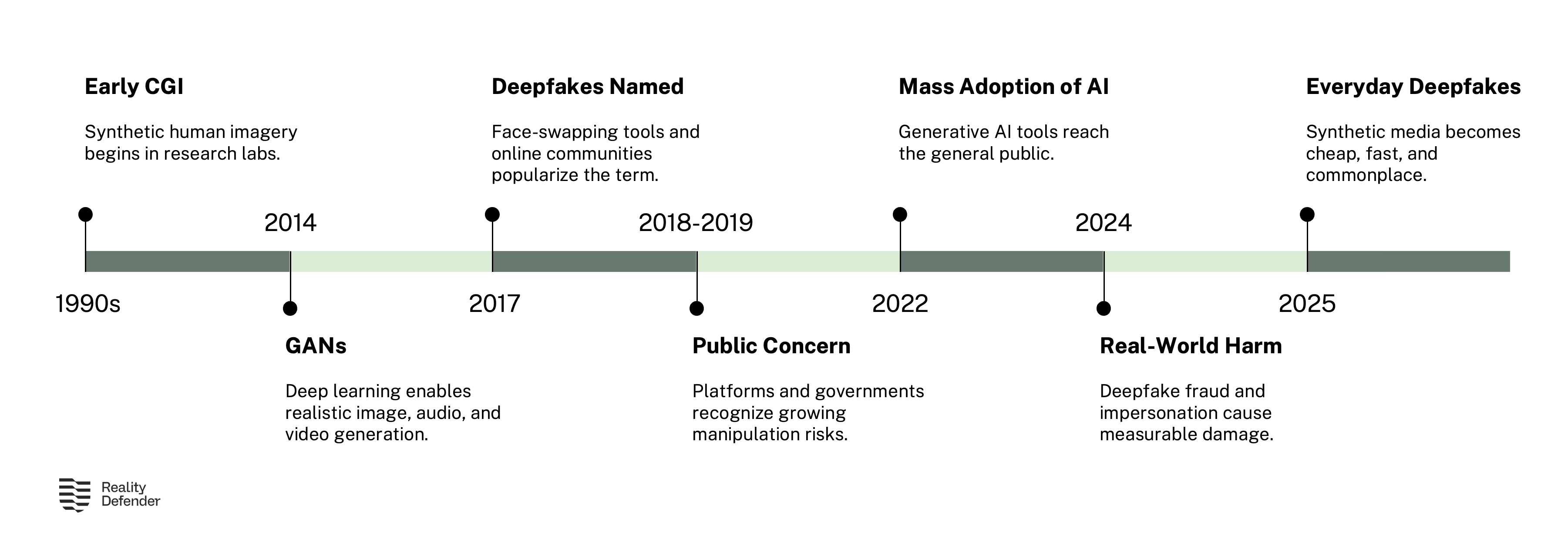

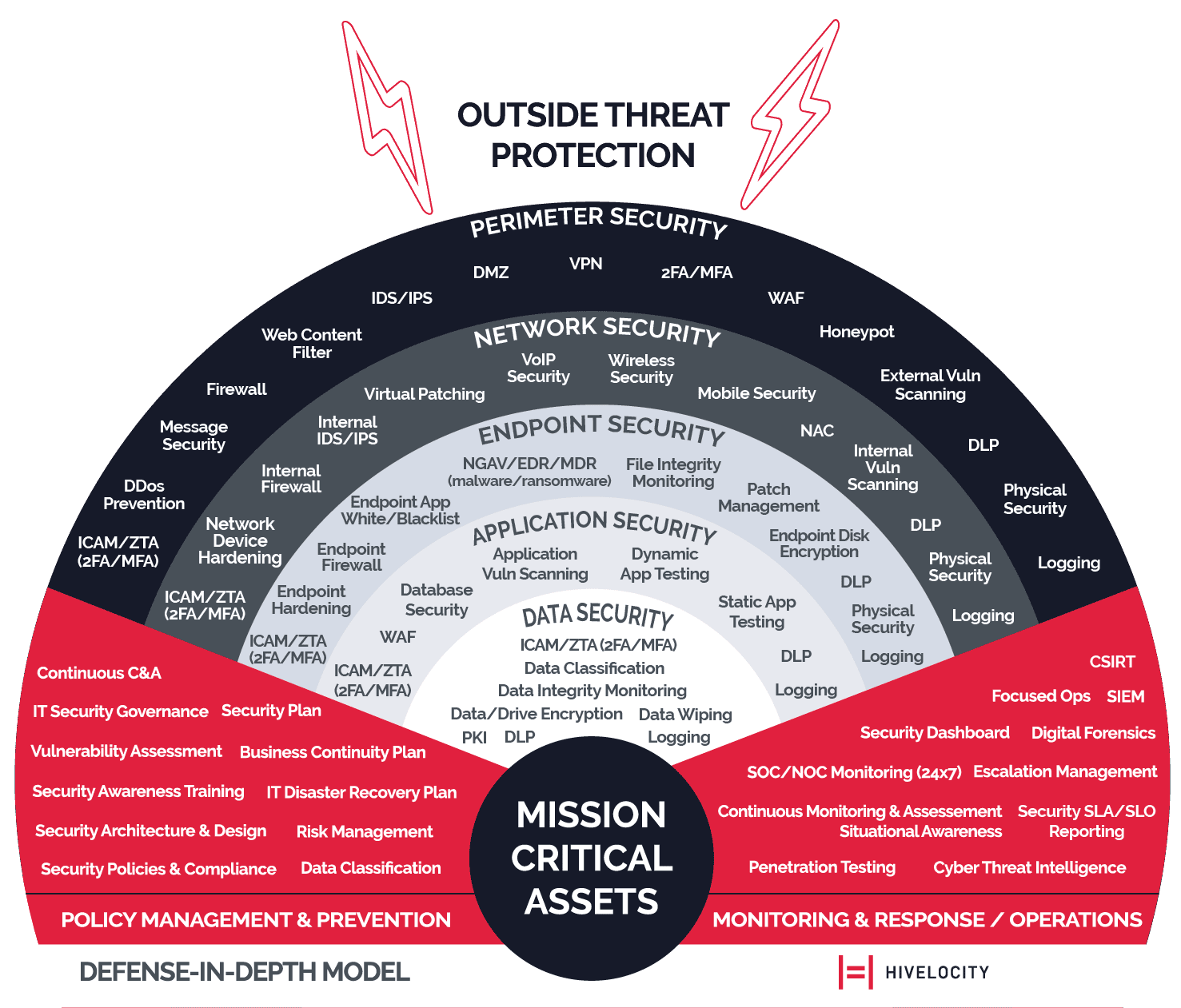

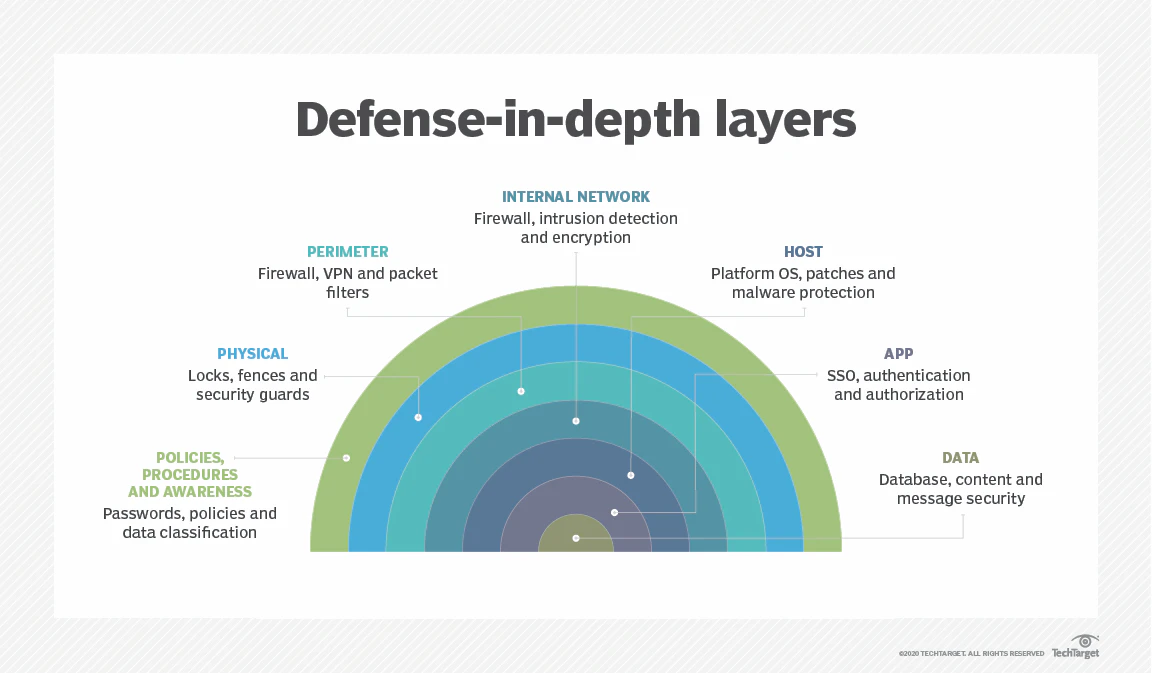

The concept of layered security, or "defense-in-depth," is a cornerstone of modern cybersecurity. Instead of relying on a single point of defense, multiple independent layers are used. If an attacker bypasses one layer, they are likely to be caught by another.

We apply this same philosophy to deepfake detection.

Our system is not just one AI model; it's a carefully orchestrated suite of proprietary algorithms that analyze an image from different perspectives. This multi-dimensional analysis is designed to catch everything from crude manual edits to state-of-the-art, AI-generated deepfakes.

Let's break down our three core detection layers.

Layer 1: Forensic-Grade Analysis

Before we even let our AI models look at the visual content of an image, we start with forensics. Every digital image has a hidden history—a set of data that tells a story about where it came from and how it was made. Our forensic analysis layer acts like a digital archaeologist, examining the file's underlying structure for signs of tampering.

What We Look For:

- Digital Fingerprints: We analyze the invisible artifacts left behind by cameras, editing software, and social media platforms. These digital fingerprints can reveal if an image has been altered after it was originally created.

- Metadata Inconsistencies: We examine the file's metadata (data about the data) for clues. For example, if an image claims to be an original photo from a smartphone but contains metadata from a known editing program, it's a red flag.

- Compression Analysis: Every time a JPEG image is re-saved with lossy compression, it loses a small amount of quality and picks up new compression artifacts. By comparing those artifacts across different regions of an image, we can often identify areas that have been edited or inserted from a different source.
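
To make the metadata example above concrete, here is a minimal sketch of a consistency check. It is illustrative only and not VerifyReal's actual rule set: the function name, the `claimed_source` label, and the editor list are assumptions, while `Software`, `Make`, and `Model` are standard EXIF tag names.

```python
# Hypothetical sketch: the editor list and the "smartphone_original" label
# are illustrative assumptions, not VerifyReal's real rules.
KNOWN_EDITORS = ("photoshop", "gimp", "lightroom")


def metadata_red_flags(metadata: dict, claimed_source: str) -> list:
    """Return reasons why the metadata contradicts the claimed source."""
    flags = []
    software = str(metadata.get("Software", "")).lower()

    if claimed_source == "smartphone_original":
        # An unedited phone photo should not carry an editing program's name.
        for editor in KNOWN_EDITORS:
            if editor in software:
                flags.append(f"Software tag mentions an editor: {metadata['Software']}")
                break
        # A camera original normally records the device that took it.
        if not metadata.get("Make") or not metadata.get("Model"):
            flags.append("Camera Make/Model tags are missing")

    return flags


edited = {"Make": "Apple", "Model": "iPhone 14", "Software": "Adobe Photoshop 24.0"}
untouched = {"Make": "Apple", "Model": "iPhone 14", "Software": "16.1"}

print(metadata_red_flags(edited, "smartphone_original"))
print(metadata_red_flags(untouched, "smartphone_original"))
```

A flag here is a hint, not proof. Many platforms strip metadata on upload, so missing tags alone are a weak signal and would need to be weighed alongside other evidence.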

This layer is our first line of defense. It's designed to catch more traditional forms of manipulation and to provide foundational data for the AI layers that follow. It allows us to understand the image's provenance—its origin and history—which is a critical piece of the puzzle.
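
One well-known way compression history can leave a trace is double quantization: values that were compressed once at one quality setting and then again at another end up with a gapped histogram. The sketch below shows the effect on toy numbers. It is an assumption-laden illustration of the general idea, not a description of VerifyReal's method, and the step sizes and coefficient range are made up.

```python
from collections import Counter


def quantize(values, step):
    """Map each value to the index of its nearest multiple of `step`."""
    return [round(v / step) for v in values]


def dequantize(bins, step):
    """Turn bin indices back into (lossy) values."""
    return [b * step for b in bins]


def empty_bins(bins):
    """Count integer bins between min and max that received no values."""
    hist = Counter(bins)
    return sum(1 for b in range(min(bins), max(bins) + 1) if hist[b] == 0)


# Toy stand-in for the frequency coefficients a JPEG encoder quantizes.
coefficients = list(range(-60, 61))

# Saved once with step 3.
single = quantize(coefficients, 3)

# Saved with step 5, decoded, then saved again with step 3.
double = quantize(dequantize(quantize(coefficients, 5), 5), 3)

print(empty_bins(single))  # 0
print(empty_bins(double))  # 16
```

If one region of an image shows the gapped pattern and another does not, the two regions probably have different compression histories, which is the kind of inconsistency a compression analysis looks for.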

Layer 2: The Advanced AI Ensemble

This is the heart of VerifyReal's detection engine. Our advanced AI ensemble is not a single model, but a team of specialized machine learning models working in concert. Each model is trained to look for different types of manipulation, and their combined judgment is far more powerful than any single algorithm.

How It Works:

- Specialized Detectives: We have models trained specifically to recognize the subtle patterns left by different AI image generators. Other models are experts at spotting face swaps, while others focus on identifying cloned or removed objects.

- Adversarial Training: Our models are constantly trained against the latest deepfake creation techniques. We essentially use AI to fight AI, creating a system that learns and adapts to new threats as they emerge.

- Cross-Validation: The results from each model in the ensemble are cross-checked against the others. This reduces the chance of false positives, because a detection must be supported by multiple independent lines of evidence.
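
The idea of combining several specialized models can be sketched as a simple agreement rule. This is a hypothetical illustration: the model names, the 0.5 threshold, and the `min_agreeing` parameter are assumptions, and a production ensemble would likely use a more sophisticated combination.

```python
from statistics import mean


def ensemble_verdict(scores: dict, threshold: float = 0.5, min_agreeing: int = 2) -> dict:
    """Combine per-model manipulation scores (0.0 to 1.0) into one verdict.

    A detection is reported only when at least `min_agreeing` models
    independently score the image at or above `threshold`.
    """
    flagged_by = sorted(name for name, score in scores.items() if score >= threshold)
    manipulated = len(flagged_by) >= min_agreeing
    return {
        "verdict": "likely manipulated" if manipulated else "no manipulation detected",
        "mean_score": mean(scores.values()),
        "flagged_by": flagged_by,
    }


# Hypothetical outputs from three specialized models.
strong_case = {"generator_artifacts": 0.91, "face_swap": 0.78, "clone_detection": 0.12}
weak_case = {"generator_artifacts": 0.65, "face_swap": 0.10, "clone_detection": 0.05}

print(ensemble_verdict(strong_case))
print(ensemble_verdict(weak_case))
print(ensemble_verdict(weak_case, min_agreeing=1))
```

The `min_agreeing` setting captures a trade-off: requiring more models to agree lowers false positives, while accepting a single confident model makes it harder for a new technique to slip past the whole ensemble.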

This ensemble approach is what allows us to achieve 95–98% accuracy across a wide range of image types. It also makes our system resilient: even if a new deepfake technique manages to fool one model, the others are still likely to catch it.

Layer 3: Explainable AI Results

For us, it's not enough to just give you a "real" or "fake" verdict. Trust requires transparency. That's why our final layer is focused on explainable AI (XAI). We believe you have the right to know why our system made the decision it did.

What You See:

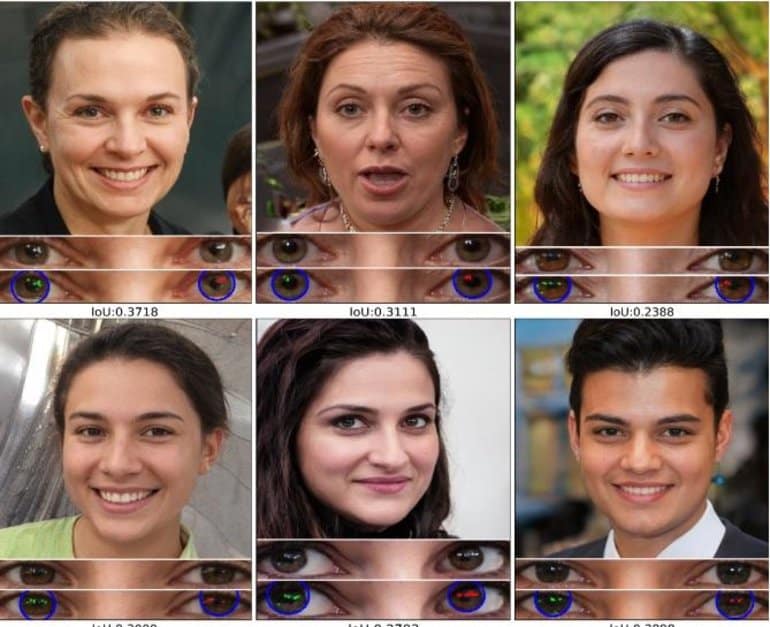

- Visual Heatmaps: Instead of a simple percentage score, VerifyReal generates a visual heatmap that overlays the original image. This heatmap highlights the specific areas that our AI has identified as suspicious. A red glow over a person's eyes or mouth instantly shows you where the manipulation was detected.

- Clear, Simple Language: We translate the complex findings of our AI into a simple, human-readable report. We'll tell you in plain English whether we detected signs of AI generation, face swapping, or other forms of manipulation.

- Actionable Insights: Our results are designed to empower you to make an informed decision. The combination of a clear verdict, a visual heatmap, and a simple explanation gives you the context you need to assess the risk.
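
The heatmap overlay described in the list above amounts to blending a highlight color into the image in proportion to how suspicious each pixel is. Here is a minimal sketch using plain nested lists of RGB tuples; the function name, the `max_alpha` value, and the per-pixel `suspicion` grid are assumptions for illustration, not VerifyReal's rendering code.

```python
def overlay_heatmap(image, suspicion, max_alpha=0.6):
    """Blend red into each pixel in proportion to its suspicion score.

    `image` is a grid of (R, G, B) tuples and `suspicion` is a grid of the
    same shape with scores from 0.0 (clean) to 1.0 (highly suspicious).
    """
    red = (255, 0, 0)
    result = []
    for pixel_row, score_row in zip(image, suspicion):
        blended_row = []
        for pixel, score in zip(pixel_row, score_row):
            # Clamp the score, then scale it so the photo stays visible
            # even under the strongest highlight.
            alpha = max_alpha * min(max(score, 0.0), 1.0)
            blended_row.append(
                tuple(round((1 - alpha) * c + alpha * r) for c, r in zip(pixel, red))
            )
        result.append(blended_row)
    return result


gray = (100, 100, 100)
image = [[gray, gray]]
suspicion = [[0.0, 1.0]]  # left pixel clean, right pixel flagged

print(overlay_heatmap(image, suspicion))  # [[(100, 100, 100), (193, 40, 40)]]
```

Capping the blend at `max_alpha` is a design choice: the flagged region turns visibly red, but the underlying image detail remains readable so a viewer can judge the area for themselves.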

This commitment to explainability is a core part of our mission. We're not a black box; we're a tool for illumination, designed to bring clarity to a world of digital deception.

Conclusion: Trust Through Transparency

VerifyReal's power comes from its multi-layered detection system. By combining forensic-grade analysis, an advanced AI ensemble, and explainable results, we provide a robust, resilient, and transparent solution for detecting deepfakes and AI-generated content.

Our technology is constantly evolving to stay ahead of new threats, but our core principles remain the same:

- Accuracy: Deliver reliable results you can count on.

- Speed: Provide real-time analysis in under 3 seconds.

- Privacy: Protect your data with a zero-retention policy.

- Transparency: Show you not just what we found, but why.

In the fight against digital misinformation, you need a partner you can trust. We hope this high-level overview gives you a clearer understanding of the technology and philosophy that power VerifyReal.

Ready to see our technology in action?

Try VerifyReal for Free and Analyze Your First Image Now

